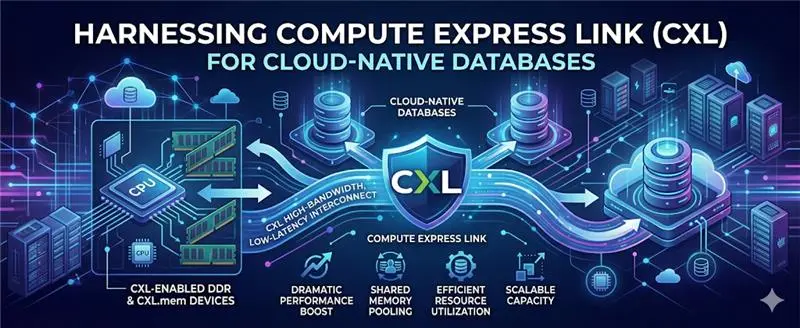

As data volumes explode and real-time applications become the norm, traditional infrastructure is struggling to keep up. Cloud-native databases demand high throughput, low latency, and flexible scalability, but conventional memory and storage architectures often create the very bottlenecks that hold them back. Compute Express Link (CXL) is emerging as the innovation that changes this equation.

What Is CXL and Why Does It Matter?

Compute Express Link is an open, cache-coherent interconnect between CPUs and devices. It is built on the PCIe physical layer and lets processors, memory expanders, and accelerators share memory efficiently. In traditional architectures, memory is tightly bound to a single server. CXL instead introduces memory pooling (added in CXL 2.0) and memory sharing (added in CXL 3.0), so resources can be allocated dynamically across systems.
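On Linux, a CXL memory expander onlined as system RAM commonly appears as a NUMA node that has memory but no CPUs. The sketch below assumes the standard sysfs layout under `/sys/devices/system/node` and lists such CPU-less nodes. Treat it as a heuristic: a memory-only node suggests, but does not prove, that CXL hardware is present. The function name `memory_only_nodes` is hypothetical.

```python
from pathlib import Path


def memory_only_nodes(sysfs_root="/sys/devices/system/node"):
    """Return IDs of NUMA nodes whose cpulist is empty (no CPUs attached).

    CXL-attached memory is often exposed this way, so the result is a rough
    hint about where expander memory lives, not a definitive CXL detector.
    """
    root = Path(sysfs_root)
    nodes = []
    if not root.is_dir():
        return nodes  # not Linux, or sysfs not mounted
    for entry in root.glob("node[0-9]*"):
        cpulist = entry / "cpulist"
        if not cpulist.is_file():
            continue
        # A memory-only node has an empty cpulist (just a newline).
        if cpulist.read_text().strip() == "":
            nodes.append(int(entry.name[len("node"):]))
    return sorted(nodes)


if __name__ == "__main__":
    print("CPU-less NUMA nodes:", memory_only_nodes())
```

Once identified, such a node can be targeted with standard NUMA placement tools, which is how many early deployments steer specific workloads onto expander memory.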

For cloud-native databases, this means access to larger memory pools, lower latency than fetching the same data from storage or over the network, and improved workload performance at scale. It is, in short, a rethinking of how compute and memory relate to one another.

The Problem with Traditional Database Architectures

Modern databases, particularly distributed and cloud-native systems, operate under a set of constraints that conventional infrastructure was never designed to resolve.

Memory is limited per node, which restricts performance for in-memory workloads regardless of how capable the compute layer is. Moving data between storage and compute introduces latency that compounds across queries and transactions. Memory sits underutilised across servers because it cannot be shared dynamically, leading to systemic inefficiency. And scaling typically means duplicating infrastructure wholesale rather than optimising what already exists.

Each of these constraints has a direct impact on performance, cost, and an organisation’s ability to scale with confidence.

How CXL Transforms Cloud-Native Databases

Memory Pooling for Elastic Scaling

CXL enables memory pools shared by multiple servers, typically connected through a CXL switch or a multi-headed memory device. Databases can draw on this additional memory without being constrained by the limits of a single machine. The result is better scalability, less stranded hardware, and faster memory-intensive queries, because working sets that would otherwise spill to disk can stay in memory, all without the overhead of over-provisioning.
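To make the pooling idea concrete, here is a toy model of hosts leasing and returning capacity from one shared pool. It is a hypothetical illustration in GiB units only. In real systems, pooled capacity is assigned by a fabric manager and onlined by the operating system, and no database calls a class like `MemoryPool` directly.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryPool:
    """Hypothetical model of a shared CXL memory pool, tracked in GiB."""
    capacity_gib: int
    leases: dict = field(default_factory=dict)  # host name -> GiB held

    @property
    def free_gib(self) -> int:
        return self.capacity_gib - sum(self.leases.values())

    def lease(self, host: str, gib: int) -> bool:
        """Grant `gib` to `host` if the pool has room; report success."""
        if gib <= 0 or gib > self.free_gib:
            return False
        self.leases[host] = self.leases.get(host, 0) + gib
        return True

    def release(self, host: str, gib: int) -> None:
        """Return up to `gib` of the host's lease to the pool."""
        remaining = max(self.leases.get(host, 0) - gib, 0)
        if remaining:
            self.leases[host] = remaining
        else:
            self.leases.pop(host, None)


pool = MemoryPool(capacity_gib=1024)
pool.lease("db-a", 600)   # db-a grows for a large query
pool.lease("db-b", 600)   # refused: only 424 GiB free
pool.release("db-a", 400) # db-a shrinks back
pool.lease("db-b", 600)   # now succeeds
print(pool.leases, pool.free_gib)
```

The point of the model is that capacity released by one database becomes immediately usable by another, instead of sitting idle inside a single server.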

Lower Latency, Faster Query Execution

By reducing the need to move data between storage and compute, CXL cuts latency at the architectural level. CXL-attached memory is slower than local DRAM, with access costs often compared to a cross-socket NUMA hop, but it is far faster than reading from SSD or over the network. Databases can therefore serve large datasets directly from pooled memory, which translates into faster query response times for end users and downstream applications alike.
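The latency argument is a weighted average over the tiers that serve each access. The sketch below computes it. The per-tier latencies in the usage lines (100 ns local DRAM, 250 ns CXL memory, 80 µs NVMe SSD) are round placeholder assumptions chosen for illustration, not measurements. Substitute figures measured on your own hardware.

```python
def expected_latency_ns(tiers):
    """Mean access latency for a list of (fraction_of_accesses, latency_ns).

    Fractions describe where accesses are served and must sum to 1.
    """
    total = sum(fraction for fraction, _ in tiers)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("access fractions must sum to 1")
    return sum(fraction * latency for fraction, latency in tiers)


# Placeholder latencies (assumptions, not benchmarks).
LOCAL_DRAM_NS, CXL_NS, NVME_NS = 100, 250, 80_000

# 80% of accesses hit local DRAM; the overflow goes either to SSD or to CXL.
spill_to_ssd = expected_latency_ns([(0.8, LOCAL_DRAM_NS), (0.2, NVME_NS)])
spill_to_cxl = expected_latency_ns([(0.8, LOCAL_DRAM_NS), (0.2, CXL_NS)])
print(f"SSD overflow: {spill_to_ssd:.0f} ns, CXL overflow: {spill_to_cxl:.0f} ns")
```

Under these assumptions, the overflow tier dominates the average. Moving it from SSD to CXL memory matters far more than the extra cost of CXL relative to local DRAM.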

Disaggregated Infrastructure

CXL supports disaggregated architectures, in which compute, memory, and storage are separated but connected efficiently rather than bundled together by necessity. This gives infrastructure teams genuine flexibility: they can add memory without adding compute, or scale compute without touching storage. Resources follow workload demands, not the other way around.

Improved Cost Efficiency

Rather than over-provisioning servers to handle peak workloads, organisations using CXL can draw on shared memory resources as needed. This shifts the economics of database infrastructure significantly, with lower capital expenditure, better utilisation rates, and a more direct relationship between resource consumption and actual workload.
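The cost argument rests on the gap between the sum of each server's peak and the peak of their combined demand. When peaks do not coincide, a shared pool can be sized for the combined peak. The sketch below computes both figures. The demand series in the usage lines are invented for illustration. The model also assumes all memory is fungible. In practice, each server keeps local DRAM for its baseline and draws only bursts from the pool, so real savings depend on how correlated the peaks are.

```python
def provisioning_gib(demand_series):
    """Return (sum_of_peaks, peak_of_sum) for per-server memory demand.

    `demand_series` maps server name -> demand in GiB, sampled at the same
    time steps. sum_of_peaks is what dedicated per-server sizing needs;
    peak_of_sum is what one shared pool would need.
    """
    series = list(demand_series.values())
    if not series:
        return 0, 0
    steps = len(series[0])
    if any(len(s) != steps for s in series):
        raise ValueError("all series need the same number of samples")
    sum_of_peaks = sum(max(s) for s in series)
    peak_of_sum = max(sum(s[t] for s in series) for t in range(steps))
    return sum_of_peaks, peak_of_sum


# Hypothetical demand for three databases whose peaks fall at different times.
demand = {
    "orders":    [200, 500, 200, 200],
    "analytics": [100, 100, 100, 450],
    "search":    [300, 300, 350, 300],
}
print(provisioning_gib(demand))
```

Here, dedicated sizing needs 1,300 GiB, while the combined demand never exceeds 950 GiB.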

Enhanced Support for AI and Analytics

Cloud-native databases increasingly power AI and machine learning workloads alongside real-time analytics, and both require substantial memory bandwidth and minimal latency. By expanding the memory capacity and bandwidth available to each host, CXL lets larger datasets stay in memory for analytics and model processing within the database environment. This removes a layer of data movement that has historically slowed these workloads down.

Real-World Applications

The practical implications of CXL span a wide range of environments. In-memory databases such as SAP HANA and Redis can hold larger datasets in memory and process them faster at scale. Real-time analytics platforms gain the ability to surface instant insights from streaming data without performance degradation. Multi-tenant SaaS platforms can share resources efficiently across clients without compromising isolation or reliability. AI-driven applications can keep database and model inference workloads closer together in memory, narrowing a boundary that has historically been costly to bridge.

Challenges to Consider

CXL’s potential is significant, but adoption is still maturing, and it would be misleading to overlook the practical considerations involved.

The hardware ecosystem requires compatible CPUs, memory devices, and supporting infrastructure, none of which are universally available today. Databases themselves need to be redesigned or substantially optimised to leverage memory pooling effectively; simply deploying CXL-capable hardware without addressing the software layer will not unlock the full benefit. And as with any meaningful architectural shift, the initial investment can be substantial.
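One example of the software work involved is tier-aware placement: keeping frequently accessed data in local DRAM and colder data in slower CXL memory. The sketch below uses a simple frequency-based split. It is an illustrative assumption, not the policy of any particular database or operating system. Production tiering typically samples accesses continuously and migrates pages incrementally.

```python
def place_pages(access_counts, local_capacity_pages):
    """Split pages into (local_dram, cxl) sets by observed access count.

    `access_counts` maps page id -> accesses seen over a sampling window.
    The hottest pages fill local DRAM; the rest go to the CXL tier.
    Ties are broken by page id so the result is deterministic.
    """
    if local_capacity_pages < 0:
        raise ValueError("capacity must be non-negative")
    ranked = sorted(access_counts, key=lambda page: (-access_counts[page], page))
    local = set(ranked[:local_capacity_pages])
    cxl = set(ranked[local_capacity_pages:])
    return local, cxl


# Hypothetical access counts for four pages, with room for two in local DRAM.
counts = {"a": 50, "b": 3, "c": 50, "d": 10}
local, cxl = place_pages(counts, local_capacity_pages=2)
print(sorted(local), sorted(cxl))
```

Without a policy like this, hot data may land on the slower tier by chance, which is one reason CXL-capable hardware alone does not guarantee the full benefit.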

That said, as hyperscalers and hardware vendors accelerate their adoption of the standard, these barriers are expected to shrink rapidly. The direction of travel is clear; for most organisations the question is when, not whether.

The Future of CXL in Cloud Databases

CXL is positioned to redefine how cloud-native databases are built, scaled, and operated. As adoption grows across the industry, the consequences will be far-reaching: fully memory-centric database architectures that treat pooled memory as a first-class resource; deeper integration with AI and data analytics platforms; more efficient multi-cloud and hybrid deployments; and a reduced reliance on traditional storage hierarchies that have long been a source of latency and inflexibility.

The trajectory points toward databases that are not just faster, but fundamentally more adaptive, capable of responding to workload demands in ways that today’s architectures simply cannot support.

Final Thoughts

Cloud-native databases sit at the heart of modern digital businesses, and their performance depends heavily on how efficiently data is accessed and processed. Compute Express Link breaks the memory constraints that have quietly limited efficiency for years, enabling dynamic, high-speed resource sharing that scales with real workloads rather than theoretical peaks.

For organisations serious about staying competitive, embracing CXL-driven architectures is not simply an infrastructure upgrade; it is a strategic decision about where database performance goes next.