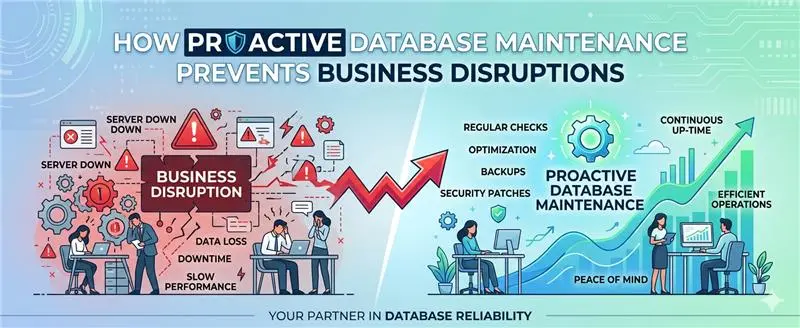

Your database broke at 2:47 AM. You found out at 9:15 AM. That seven-hour gap? It costs you more than downtime; it costs you trust.

This is the uncomfortable truth that most UK businesses are still living with. For decades, database management has operated on a fundamentally flawed model: wait for something to go wrong, then fix it. Alerts fire after the damage is done. Engineers scramble through logs after the pipeline has already collapsed. Post-mortems are written for failures that were, in hindsight, entirely preventable.

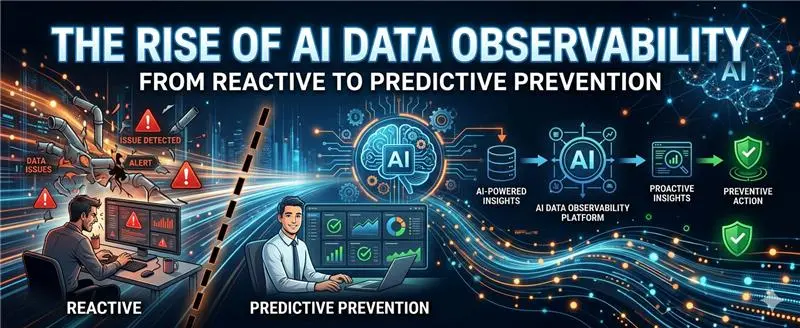

But the rules are changing. AI-powered data observability is rewriting the playbook, shifting organisations from firefighting to foresight, from reactive patching to predictive prevention.

The Limits of “Good Enough” Monitoring

Traditional database monitoring tools were built for a simpler era. They watch predefined thresholds for CPU at 90%, disk at 80% and shout when those lines are crossed. The problem is that by the time a threshold is breached, you’re already in trouble.

For UK enterprises operating in a heavily regulated environment, navigating GDPR compliance, FCA data integrity requirements, and the expectations of a digitally sophisticated customer base, “good enough” monitoring is no longer fit for purpose. A retail bank that discovers a data pipeline failure after its morning batch run has already missed its regulatory reporting window. A logistics firm that catches a database anomaly after order fulfilment has gone wrong has already disappointed its customers.

The reactive model doesn’t just create technical debt. It creates business risk.

What AI Observability Actually Means

Data observability is not simply better monitoring. It is a fundamentally different philosophy.

Where monitoring asks “has something broken?”, observability asks “is something about to break, and why?” It provides continuous, contextual awareness of your data’s health its freshness, completeness, distribution, schema integrity, and lineage, not just at the moment of failure, but at every point in its journey.

AI elevates this further. Machine learning models trained on your specific data patterns can identify anomalies that no human-defined threshold could anticipate. They detect the subtle drift in a dataset before it cascades into a pipeline failure. They correlate seemingly unrelated signals, a slight increase in query latency here, an unusual spike in null values there, and surface the story those signals are telling before it becomes a crisis.

This is the shift from reactive to predictive: from responding to what has happened, to preventing what is about to.

The UK Context: Why This Matters More Here

British businesses face a distinct set of pressures that make AI observability not just advantageous, but increasingly essential.

GDPR carries teeth in the UK that are felt nowhere more acutely than in data management. Inaccurate, incomplete, or corrupted data, the very conditions that poor observability allows to fester, can constitute a compliance failure before anyone has noticed. The Information Commissioner’s Office does not accept “we didn’t know” as a defence.

Equally, the UK’s financial services sector operates under some of the strictest data governance requirements in the world. When the FCA expects real-time accuracy in transaction data and reporting pipelines, reactive database management is simply incompatible with regulatory reality.

Beyond compliance, there is the competitive dimension. British consumers and B2B clients alike have little patience for data-related failures. Whether it is a broken checkout experience caused by a degraded product database or an incorrect client report stemming from a silent ETL failure, the reputational cost lands fast.

From Alert Fatigue to Actionable Intelligence

One of the most underappreciated problems in database teams today is not the absence of data but the overwhelming volume of it. Engineers are drowning in alerts, many of which are noise. This alert fatigue means that the genuinely critical signal often gets buried beneath a flood of low-priority notifications.

AI observability systems address this by doing something traditional monitoring cannot: learning the difference between what is normal for your environment and what is genuinely anomalous. They understand that a query volume surge on a Monday morning is expected behaviour, not a threat. They know that your end-of-month reporting workload looks different from your daily operations. Context, not just thresholds, becomes the basis for intelligence.

The result is fewer alerts, but better ones. Engineers are directed to the right problem at the right time and increasingly, before the problem has materialised.

Predictive Prevention in Practice

So what does this look like in a real database environment?

Imagine a UK-based e-commerce company running a complex data stack feeding everything from product recommendations to fraud detection. An AI observability layer continuously monitors data freshness, schema consistency, row-count trends, and distribution shifts across every pipeline. Overnight, it detects that a supplier data feed has begun delivering records with an unusual proportion of missing SKU attributes not enough to trigger a traditional alert, but a pattern the model recognises as a precursor to downstream catalogue failures.

By 7 AM, the data engineering team has a prioritised notification, a clear diagnosis, and the lineage trace showing exactly where the issue originated. The problem is resolved before the morning’s trading window opens. No customer ever sees an empty product page. No revenue is lost.

That is predictive prevention. Not luck. Not a faster incident response. Prevention.

The Road Ahead

AI data observability is not a future concept it is available, deployable, and delivering measurable ROI for forward-thinking organisations right now. But adoption across UK enterprises remains uneven. Many businesses are still investing in better versions of the same reactive tools that have always let them down.

The organisations that will lead in the next five years are those that recognise data reliability as a strategic asset, not just an IT concern. They are the ones investing not merely in storing and processing data, but in trusting it, knowing that what their systems report is accurate, timely, and complete.

The question is no longer whether AI observability will become the standard for database management. It will. The question is whether your organisation will be ahead of that curve or catching up to it.