When Minutes Cost Millions

Database downtime rarely begins with a dramatic failure. It starts with subtle degradation: rising query latency, thread contention, I/O waits, or replication lag. These early signals are often ignored because systems are still technically “available”. Then a tipping point is reached: connection pools saturate, transactions time out, and the application layer begins to fail.

At that stage, the financial impact escalates rapidly. In high-throughput environments, whether e-commerce, fintech, or SaaS, even a few minutes of downtime can translate into significant revenue loss, SLA penalties, and reputational damage. What makes this more critical is that most of these failures are not unpredictable; they are the result of weak operational discipline and missing technical safeguards.

The Compounding Risk of Inefficient Queries

One of the most common root causes of database instability lies in poorly optimised queries. Full table scans on large datasets, missing or incorrect indexing strategies, and inefficient joins can drastically increase CPU and memory utilisation. Over time, this leads to resource contention, locking issues, and degraded throughput.

For example, a query that performs adequately on a dataset of 100,000 rows can become a major bottleneck at 10 million rows if indexes are not aligned with access patterns. Similarly, excessive use of SELECT * or unbounded queries increases network and memory overhead unnecessarily.

A technically mature approach involves continuous query profiling using execution plans, optimiser cost estimates, and runtime statistics. Indexing strategies should be revisited regularly, including composite indexes, covering indexes, and partition pruning where applicable. Query optimisation is not a one-time activity; it must evolve with data growth and application behaviour.
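As a minimal sketch of plan-based profiling, the snippet below uses Python's built-in `sqlite3` module to compare the execution plan of one query before and after adding a composite index. The `orders` table, its columns, and the index name are hypothetical, and other engines expose plans through their own `EXPLAIN` variants with different output formats.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return the human-readable steps of SQLite's EXPLAIN QUERY PLAN."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # Each row is (id, parent, notused, detail); only the detail text is kept.
    return [row[3] for row in rows]


# Hypothetical table, filled with synthetic rows for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, status, total) VALUES (?, ?, ?)",
    [(i % 500, "open" if i % 3 else "closed", i * 1.5) for i in range(10_000)],
)

# Named columns instead of SELECT *, filtered on two columns.
sql = "SELECT customer_id, status FROM orders WHERE customer_id = ? AND status = ?"

plan_before = query_plan(conn, sql, (42, "open"))  # expected to be a table scan

# Composite index aligned with the access pattern of the query above.
conn.execute(
    "CREATE INDEX idx_orders_customer_status ON orders (customer_id, status)"
)
plan_after = query_plan(conn, sql, (42, "open"))  # expected to use the index

print("before:", plan_before)
print("after: ", plan_after)
```

The exact plan wording varies between SQLite versions, so the useful signal is the change from a scan to an index search rather than the literal text.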

Designing for High Availability and Fault Tolerance

Performance tuning alone cannot guarantee uptime. Systems must be architected for failure scenarios. High availability (HA) design ensures that database services remain operational even when individual components fail.

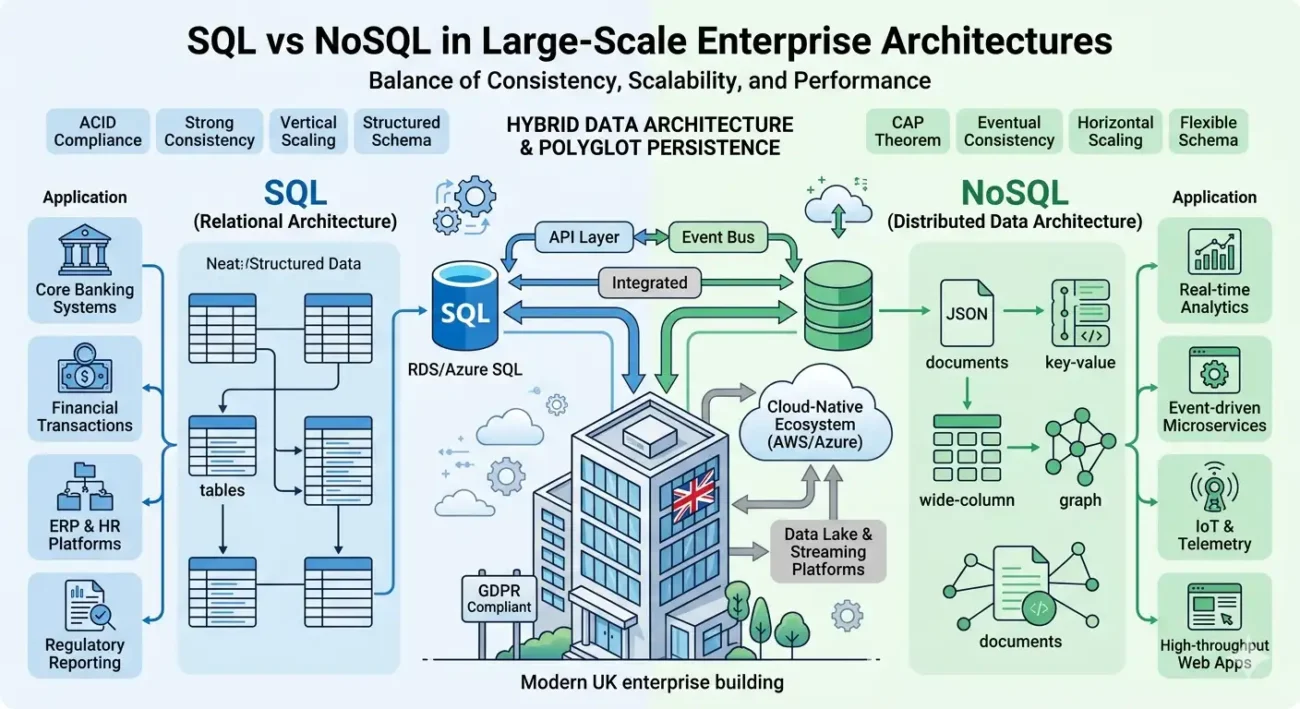

This typically involves primary-replica (master-slave) or multi-primary replication architectures. Synchronous replication offers stronger consistency but adds commit latency, whereas asynchronous replication improves write performance but lets replicas lag behind the primary, so the most recent writes can be lost on failover. Choosing the correct model depends on workload sensitivity and consistency requirements.

Failover mechanisms must be automated and deterministic. Manual intervention during an outage increases recovery time and introduces human error. Technologies such as clustering, quorum-based systems, and load balancers help ensure seamless failover.
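To make "automated and deterministic" concrete, here is a simplified sketch of a quorum-based failover decision. The `Replica` type, the observer names, and the promotion rule (most advanced reachable replica, ties broken by name) are assumptions for illustration. Real cluster managers also handle fencing, leases, and split-brain protection, which this sketch leaves out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Replica:
    name: str
    applied_position: int  # last replication log position applied (hypothetical unit)
    reachable: bool


def primary_is_down(failure_votes: dict[str, bool]) -> bool:
    """True only if a strict majority of observers report the primary as unreachable."""
    votes_for_down = sum(1 for saw_failure in failure_votes.values() if saw_failure)
    return votes_for_down > len(failure_votes) // 2


def choose_new_primary(replicas: list[Replica]) -> Optional[Replica]:
    """Pick the reachable replica with the most applied data; break ties by name."""
    candidates = [r for r in replicas if r.reachable]
    if not candidates:
        return None
    # Same inputs always give the same answer, regardless of list order.
    return min(candidates, key=lambda r: (-r.applied_position, r.name))


votes = {"observer-a": True, "observer-b": True, "observer-c": False}
replicas = [
    Replica("db-2", applied_position=1040, reachable=True),
    Replica("db-3", applied_position=1050, reachable=True),
    Replica("db-4", applied_position=1090, reachable=False),
]

promoted = choose_new_primary(replicas) if primary_is_down(votes) else None
print(promoted.name if promoted else "no failover")
```

Requiring a strict majority means a single observer with a broken network path cannot trigger a failover on its own.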

Equally critical is validating failover behaviour through controlled testing. Chaos engineering practices (intentionally introducing failures) can reveal weaknesses in HA configurations before real incidents occur.

Observability Beyond Basic Monitoring

Traditional monitoring focuses on surface-level metrics such as CPU usage, disk space, and uptime. While useful, these metrics are insufficient for preventing outages.

Modern database operations require deep observability. This includes tracking query execution time distributions, lock wait times, buffer cache hit ratios, replication delay, and connection pool utilisation. More advanced setups integrate distributed tracing to understand how database performance impacts application latency end-to-end.

Anomaly detection and threshold-based alerting should be fine-tuned to reduce noise while ensuring early detection of abnormal patterns. For instance, a gradual increase in disk I/O latency or a steady decline in cache hit ratio often precedes major performance incidents.
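The two signals mentioned above can be approximated with very little code. The sketch below computes a nearest-rank latency percentile and flags drift by comparing the mean of a recent window against the window before it. The function names, window size, and 20% threshold are illustrative assumptions, not recommended values.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct between 0 and 100)."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def drifted(samples, window, max_relative_change):
    """Compare the mean of the last `window` samples with the `window` before it."""
    if len(samples) < 2 * window:
        return False  # not enough history to judge
    baseline = samples[-2 * window:-window]
    recent = samples[-window:]
    baseline_mean = sum(baseline) / window
    recent_mean = sum(recent) / window
    if baseline_mean == 0:
        return recent_mean != 0
    change = abs(recent_mean - baseline_mean) / abs(baseline_mean)
    return change > max_relative_change


# Synthetic disk I/O latency samples in milliseconds.
io_latency_ms = [2.0, 2.0, 2.0, 2.0, 2.0, 3.0, 3.0, 3.0, 3.0, 3.0]
# Synthetic query durations in milliseconds: 1, 2, ..., 100.
query_ms = list(range(1, 101))

print("p95 query time:", percentile(query_ms, 95))
print("I/O latency drifting:", drifted(io_latency_ms, window=5, max_relative_change=0.2))
```

Comparing windows rather than single samples is one way to reduce alert noise, at the cost of reacting a little later.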

The objective is to move from reactive troubleshooting to predictive intervention: identifying and resolving issues before they affect users.

Backup Strategies and Recovery Engineering

Backups are often implemented but rarely engineered. A robust backup strategy must consider not only data protection but also recovery performance.

Full backups, incremental backups, and point-in-time recovery (PITR) mechanisms should be configured based on business requirements. Storage redundancy across regions or availability zones further reduces risk.

However, the defining factor is recovery time. A backup that takes hours to restore is operationally ineffective in a critical outage. Regular recovery drills must be conducted to validate both data integrity and restoration speed.

Transaction log backups and snapshot-based backups should also be monitored for consistency. Silent failures in backup pipelines are more common than expected and often go unnoticed until recovery is attempted.
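One way to catch silent pipeline failures is to check backup freshness and restore-drill duration against explicit targets. The sketch below assumes the timestamps and durations come from your own backup tooling. The function name, parameters, and the 24-hour and 1-hour targets are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def backup_findings(
    last_backup_at: Optional[datetime],
    last_restore_duration: Optional[timedelta],
    now: datetime,
    max_backup_age: timedelta,
    max_restore_time: timedelta,
) -> list[str]:
    """Return a list of problems; an empty list means both checks passed."""
    findings = []
    if last_backup_at is None:
        findings.append("no successful backup recorded")
    elif now - last_backup_at > max_backup_age:
        findings.append("latest backup is older than the allowed age")
    if last_restore_duration is None:
        findings.append("no restore drill recorded")
    elif last_restore_duration > max_restore_time:
        findings.append("last restore drill exceeded the recovery time target")
    return findings


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
problems = backup_findings(
    last_backup_at=datetime(2024, 1, 1, 6, 0, tzinfo=timezone.utc),  # 30 hours old
    last_restore_duration=timedelta(hours=3),
    now=now,
    max_backup_age=timedelta(hours=24),
    max_restore_time=timedelta(hours=1),
)
for problem in problems:
    print("ALERT:", problem)
```

Treating a missing record as a finding matters here: a pipeline that stopped reporting should alert just as loudly as one that reported a failure.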

Capacity Planning and Workload Modelling

Database performance degrades non-linearly as workloads increase. Without proactive capacity planning, systems eventually reach a saturation point where performance drops sharply.

Capacity planning involves analysing historical workload trends, forecasting growth, and modelling future demand. This includes evaluating read/write ratios, peak concurrency levels, and data growth rates.
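As a rough illustration of forecasting from historical trends, the sketch below fits a least-squares line to daily storage usage and estimates the days remaining until a capacity limit. It assumes roughly linear growth, which real workloads often violate, and the sample figures are invented.

```python
from typing import Optional


def days_until_full(daily_usage_gb: list[float], capacity_gb: float) -> Optional[float]:
    """Estimate days until capacity is reached from a least-squares growth rate.

    Returns None when there is too little data or usage is not growing.
    """
    n = len(daily_usage_gb)
    if n < 2:
        return None
    mean_x = (n - 1) / 2
    mean_y = sum(daily_usage_gb) / n
    numerator = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(daily_usage_gb))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    growth_per_day = numerator / denominator
    if growth_per_day <= 0:
        return None
    return (capacity_gb - daily_usage_gb[-1]) / growth_per_day


usage = [100.0, 110.0, 120.0, 130.0]  # one sample per day, in GB
remaining = days_until_full(usage, capacity_gb=200.0)
print("estimated days until full:", remaining)
```

The same shape of calculation can be applied to peak concurrency or write volume, as long as the linear assumption is checked against the actual trend.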

Vertical scaling (adding more CPU, RAM, or storage) has limits and diminishing returns. Horizontal scaling through sharding, partitioning, or distributed databases requires careful design to avoid introducing complexity and consistency challenges.

Workload isolation is another critical technique. Separating analytical queries from transactional workloads using read replicas or dedicated data warehouses prevents resource contention and ensures consistent performance.

Concurrency Control and Transaction Management

In high-traffic systems, concurrency issues are a major source of instability. Lock contention, deadlocks, and long-running transactions can block critical operations and degrade system throughput.

Choosing the right isolation level is essential. While stricter isolation levels, such as SERIALIZABLE, ensure data consistency, they can significantly reduce concurrency. Conversely, lower isolation levels improve performance but may introduce anomalies.

Techniques such as optimistic concurrency control, row-level locking, and shorter transaction scopes help balance consistency and performance. Monitoring deadlock frequency and analysing transaction logs can provide insights into contention hotspots.
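Optimistic concurrency control is commonly implemented with a version column: an update succeeds only if the row still has the version the writer originally read. The sketch below shows the pattern with Python's `sqlite3` module; the `accounts` table and the `update_balance` helper are hypothetical.

```python
import sqlite3


def update_balance(conn, account_id, expected_version, new_balance):
    """Apply the update only if nobody else changed the row since it was read."""
    cursor = conn.execute(
        "UPDATE accounts SET balance = ?, version = version + 1 "
        "WHERE id = ? AND version = ?",
        (new_balance, account_id, expected_version),
    )
    conn.commit()
    # Zero affected rows means the version moved on: the caller should re-read and retry.
    return cursor.rowcount == 1


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts ("
    "id INTEGER PRIMARY KEY, balance INTEGER NOT NULL, version INTEGER NOT NULL)"
)
conn.execute("INSERT INTO accounts (id, balance, version) VALUES (1, 100, 0)")
conn.commit()

# Two writers both read version 0; only the first write is accepted.
first_write = update_balance(conn, 1, expected_version=0, new_balance=150)
stale_write = update_balance(conn, 1, expected_version=0, new_balance=90)

balance, version = conn.execute(
    "SELECT balance, version FROM accounts WHERE id = 1"
).fetchone()
print(first_write, stale_write, balance, version)
```

No lock is held between the read and the write, which keeps transactions short; the trade-off is that callers must handle the rejected case.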

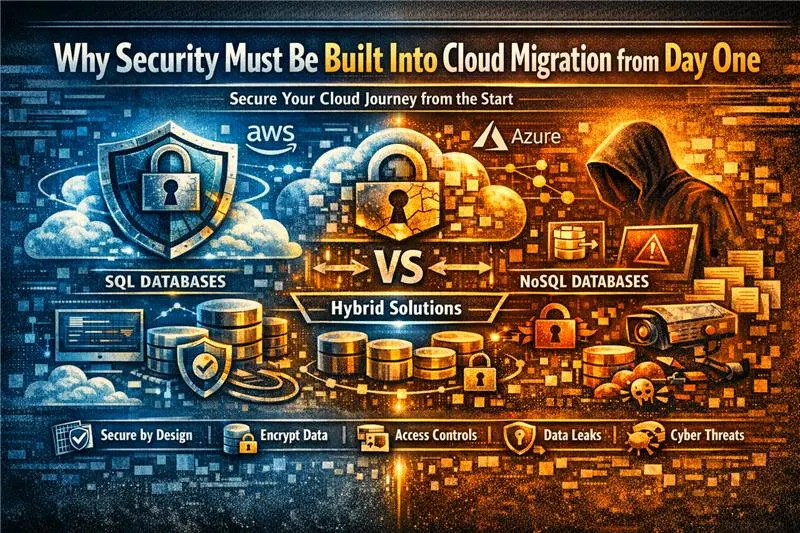

Security as an Operational Stability Factor

Database security is not just about preventing breaches; it directly impacts operational reliability. Uncontrolled access, excessive privileges, and unpatched vulnerabilities can lead to accidental or malicious disruptions.

Implementing role-based access control (RBAC), enforcing least privilege, and regularly auditing access logs are essential practices. Encryption, both at rest and in transit, adds a layer of protection without significantly impacting performance when configured correctly.

Patch management must be proactive. Many outages occur not because patches are applied, but because they are delayed, leaving systems exposed to known vulnerabilities or bugs.

Automation and Infrastructure as Code

Manual database management introduces variability and risk. Automation ensures consistency across environments and reduces dependency on individual operators.

Infrastructure as Code (IaC) allows database environments to be provisioned, configured, and scaled programmatically. This ensures that production, staging, and development environments remain consistent.

Automated workflows for backups, failover, scaling, and patching reduce operational overhead and improve reliability. Combined with CI/CD pipelines, database schema changes can be deployed in a controlled and versioned manner, minimising deployment risks.
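A minimal sketch of versioned schema changes is shown below, using SQLite's `PRAGMA user_version` to record which migrations have been applied. The migration statements and the `migrate` helper are hypothetical. Dedicated migration tools add rollbacks, locking, and checksums that this sketch omits.

```python
import sqlite3

# Ordered, append-only list of schema changes (hypothetical examples).
MIGRATIONS = [
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL)",
    "ALTER TABLE customers ADD COLUMN created_at TEXT",
    "CREATE INDEX idx_customers_email ON customers (email)",
]


def migrate(conn):
    """Apply migrations that have not run yet and return the resulting schema version."""
    current = conn.execute("PRAGMA user_version").fetchone()[0]
    pending = MIGRATIONS[current:]
    for version, statement in enumerate(pending, start=current + 1):
        conn.execute(statement)
        # PRAGMA does not accept bound parameters; `version` is always an int here.
        conn.execute(f"PRAGMA user_version = {version}")
        conn.commit()
    return conn.execute("PRAGMA user_version").fetchone()[0]


conn = sqlite3.connect(":memory:")
first_run = migrate(conn)   # applies all three statements
second_run = migrate(conn)  # nothing pending, so the schema is left unchanged
print(first_run, second_run)
```

Because the runner is idempotent, the same script can be executed by a pipeline against development, staging, and production without environment-specific branching.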

The Shift from Reactive to Engineering-Led Operations

Ultimately, reducing downtime is not about isolated fixes but about adopting an engineering-led approach to database operations.

This involves integrating performance testing into development cycles, conducting post-incident reviews with root cause analysis, and continuously refining operational processes. Metrics such as Mean Time to Detect (MTTD) and Mean Time to Recover (MTTR) should be tracked and improved over time.
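Both metrics are straightforward to compute once incident timestamps are recorded consistently. In the sketch below, the `Incident` record is hypothetical, and MTTR is measured from the start of the incident to resolution; some teams measure it from detection instead, so the definition should be agreed before trends are compared.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Incident:
    started_at: datetime
    detected_at: datetime
    resolved_at: datetime


def mean_duration(durations: list[timedelta]) -> timedelta:
    """Arithmetic mean of a non-empty list of durations."""
    return sum(durations, timedelta()) / len(durations)


def mttd(incidents: list[Incident]) -> timedelta:
    """Mean time from the start of an incident to its detection."""
    return mean_duration([i.detected_at - i.started_at for i in incidents])


def mttr(incidents: list[Incident]) -> timedelta:
    """Mean time from the start of an incident to its resolution (assumed definition)."""
    return mean_duration([i.resolved_at - i.started_at for i in incidents])


# Invented incident records for illustration.
incidents = [
    Incident(datetime(2024, 3, 1, 10, 0), datetime(2024, 3, 1, 10, 10), datetime(2024, 3, 1, 11, 0)),
    Incident(datetime(2024, 3, 9, 14, 0), datetime(2024, 3, 9, 14, 20), datetime(2024, 3, 9, 14, 40)),
]

print("MTTD:", mttd(incidents))
print("MTTR:", mttr(incidents))
```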

Organisations that treat databases as strategic assets rather than background infrastructure achieve higher reliability, better performance, and lower operational risk.

Conclusion: Engineering Uptime as a Discipline

Downtime is rarely a result of a single failure. It is the cumulative effect of overlooked inefficiencies, weak architecture, and reactive operations.

The transition to uptime is driven by technical discipline: optimised queries, resilient architectures, deep observability, tested recovery strategies, and automated operations. These practices are neither complex nor prohibitively expensive, but they require consistency and expertise.

In a data-driven economy, uptime is not just a technical metric. It is a direct driver of revenue, trust, and competitive advantage. Organisations that understand this do not just avoid losses; they build systems that scale confidently, perform reliably, and deliver measurable business value.